import sys

import numpy as np

import matplotlib.pyplot as plt

%matplotlib inline

# import seaborn as sns

from keras.datasets import mnist

from keras.utils import to_categorical

(x_train, y_train), (x_test, y_test) = mnist.load_data()

x_train = x_train.astype('float32') / 255.

x_test = x_test .astype('float32') / 255.

x_train = np.reshape(x_train, (len(x_train), 28, 28, 1))

x_test = np.reshape(x_test, (len(x_test), 28, 28, 1))

y_train_cat = to_categorical(y_train).astype(np.float32)

y_test_cat = to_categorical(y_test).astype(np.float32)

num_classes = y_test_cat.shape[1]

batch_size = 500

latent_dim = 8

dropout_rate = 0.3

start_lr = 0.001

from keras.layers import Input, Dense

from keras.layers import BatchNormalization, Dropout, Flatten, Reshape, Lambda

from keras.layers import concatenate

from keras.models import Model

from keras.objectives import binary_crossentropy

from keras.layers.advanced_activations import LeakyReLU

from keras import backend as K

def create_cvae():

models = {}

# Добавим Dropout и BatchNormalization

def apply_bn_and_dropout(x):

return Dropout(dropout_rate)(BatchNormalization()(x))

# Энкодер

input_img = Input(shape=(28, 28, 1))

flatten_img = Flatten()(input_img)

input_lbl = Input(shape=(num_classes,), dtype='float32')

x = concatenate([flatten_img, input_lbl])

x = Dense(256, activation='relu')(x)

x = apply_bn_and_dropout(x)

# Предсказываем параметры распределений

# Вместо того чтобы предсказывать стандартное отклонение, предсказываем логарифм вариации

z_mean = Dense(latent_dim)(x)

z_log_var = Dense(latent_dim)(x)

# Сэмплирование из Q с трюком репараметризации

def sampling(args):

z_mean, z_log_var = args

epsilon = K.random_normal(shape=(batch_size, latent_dim), mean=0., stddev=1.0)

return z_mean + K.exp(z_log_var / 2) * epsilon

l = Lambda(sampling, output_shape=(latent_dim,))([z_mean, z_log_var])

models["encoder"] = Model([input_img, input_lbl], l, 'Encoder')

models["z_meaner"] = Model([input_img, input_lbl], z_mean, 'Enc_z_mean')

models["z_lvarer"] = Model([input_img, input_lbl], z_log_var, 'Enc_z_log_var')

# Декодер

z = Input(shape=(latent_dim, ))

input_lbl_d = Input(shape=(num_classes,), dtype='float32')

x = concatenate([z, input_lbl_d])

x = Dense(256)(x)

x = LeakyReLU()(x)

x = apply_bn_and_dropout(x)

x = Dense(28*28, activation='sigmoid')(x)

decoded = Reshape((28, 28, 1))(x)

models["decoder"] = Model([z, input_lbl_d], decoded, name='Decoder')

models["cvae"] = Model([input_img, input_lbl, input_lbl_d],

models["decoder"]([models["encoder"]([input_img, input_lbl]), input_lbl_d]),

name="CVAE")

models["style_t"] = Model([input_img, input_lbl, input_lbl_d],

models["decoder"]([models["z_meaner"]([input_img, input_lbl]), input_lbl_d]),

name="style_transfer")

def vae_loss(x, decoded):

x = K.reshape(x, shape=(batch_size, 28*28))

decoded = K.reshape(decoded, shape=(batch_size, 28*28))

xent_loss = 28*28*binary_crossentropy(x, decoded)

kl_loss = -0.5 * K.sum(1 + z_log_var - K.square(z_mean) - K.exp(z_log_var), axis=-1)

return (xent_loss + kl_loss)/2/28/28

return models, vae_loss

models, vae_loss = create_cvae()

cvae = models["cvae"]

from keras.optimizers import Adam, RMSprop

cvae.compile(optimizer=Adam(start_lr), loss=vae_loss)

digit_size = 28

def plot_digits(*args, invert_colors=False):

args = [x.squeeze() for x in args]

n = min([x.shape[0] for x in args])

figure = np.zeros((digit_size * len(args), digit_size * n))

for i in range(n):

for j in range(len(args)):

figure[j * digit_size: (j + 1) * digit_size,

i * digit_size: (i + 1) * digit_size] = args[j][i].squeeze()

if invert_colors:

figure = 1-figure

plt.figure(figsize=(2*n, 2*len(args)))

plt.imshow(figure, cmap='Greys_r')

plt.grid(False)

ax = plt.gca()

ax.get_xaxis().set_visible(False)

ax.get_yaxis().set_visible(False)

plt.show()

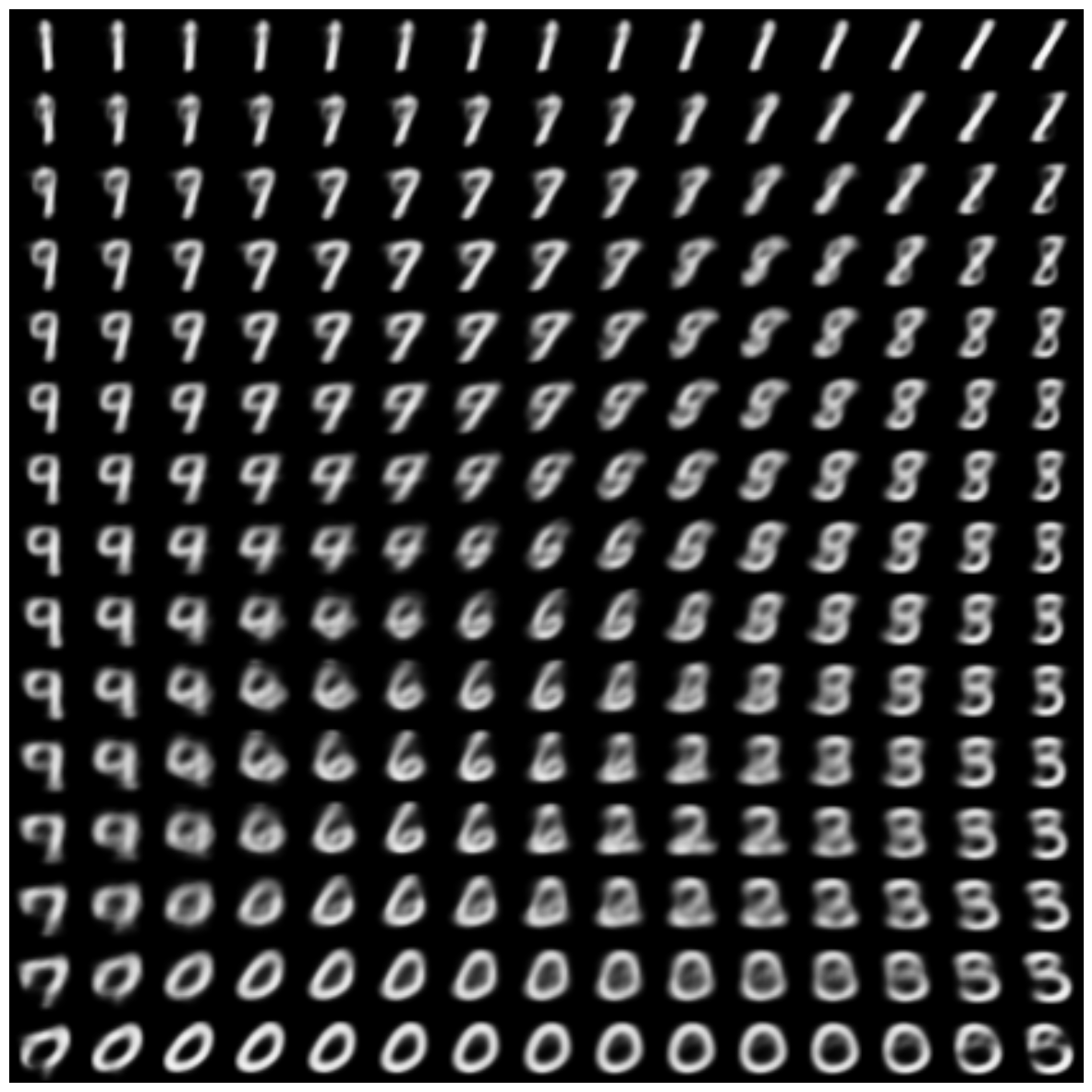

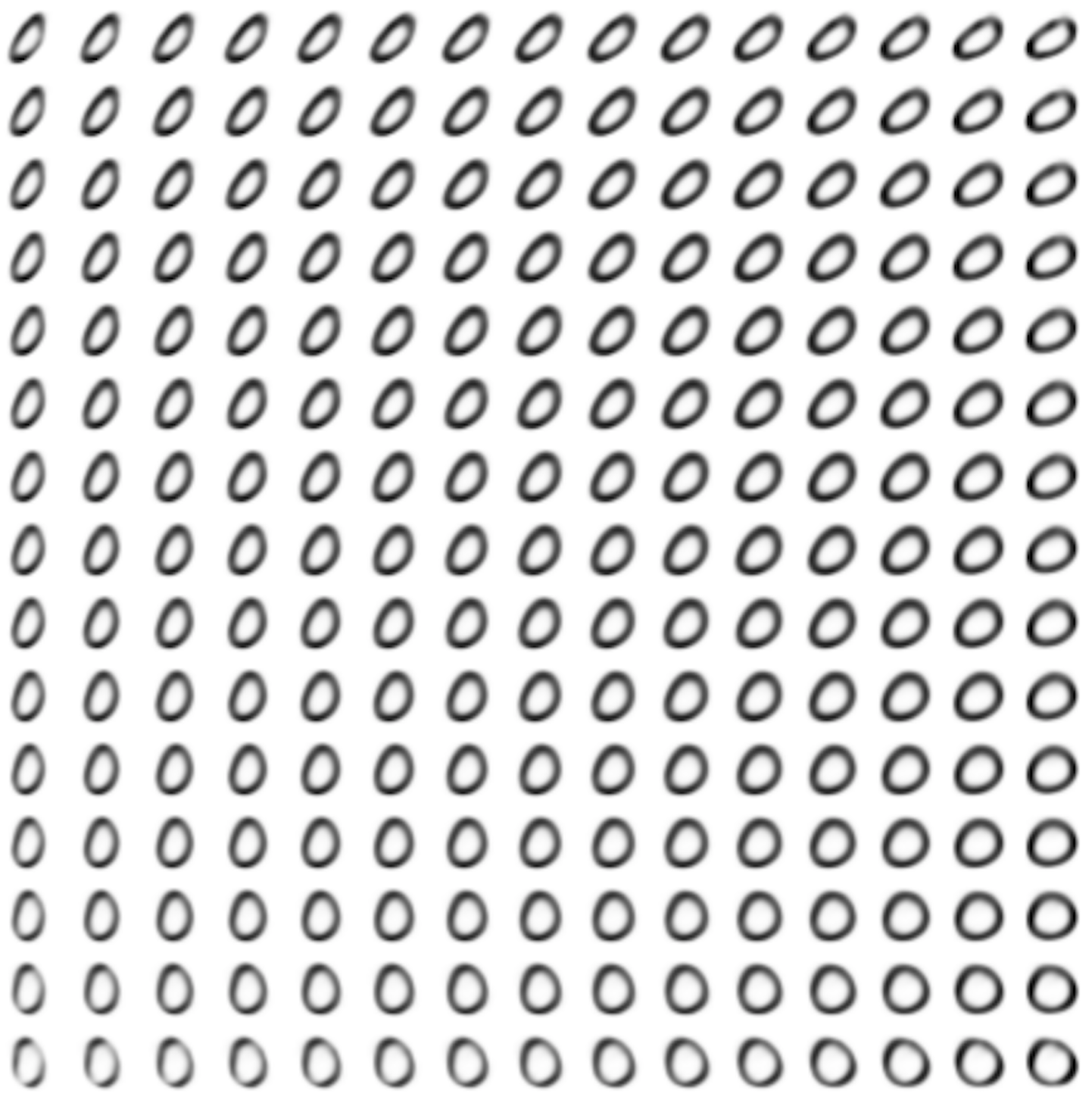

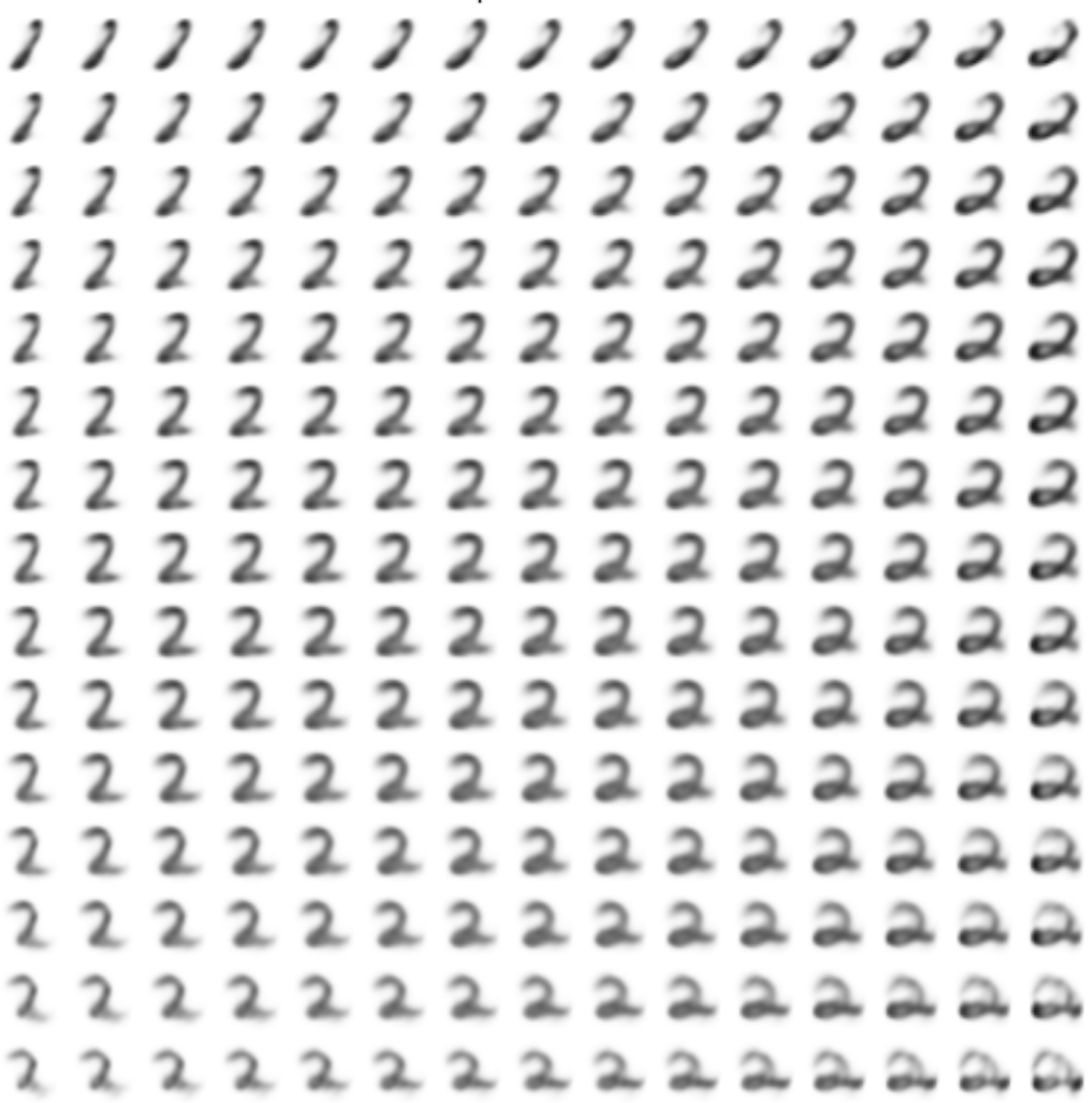

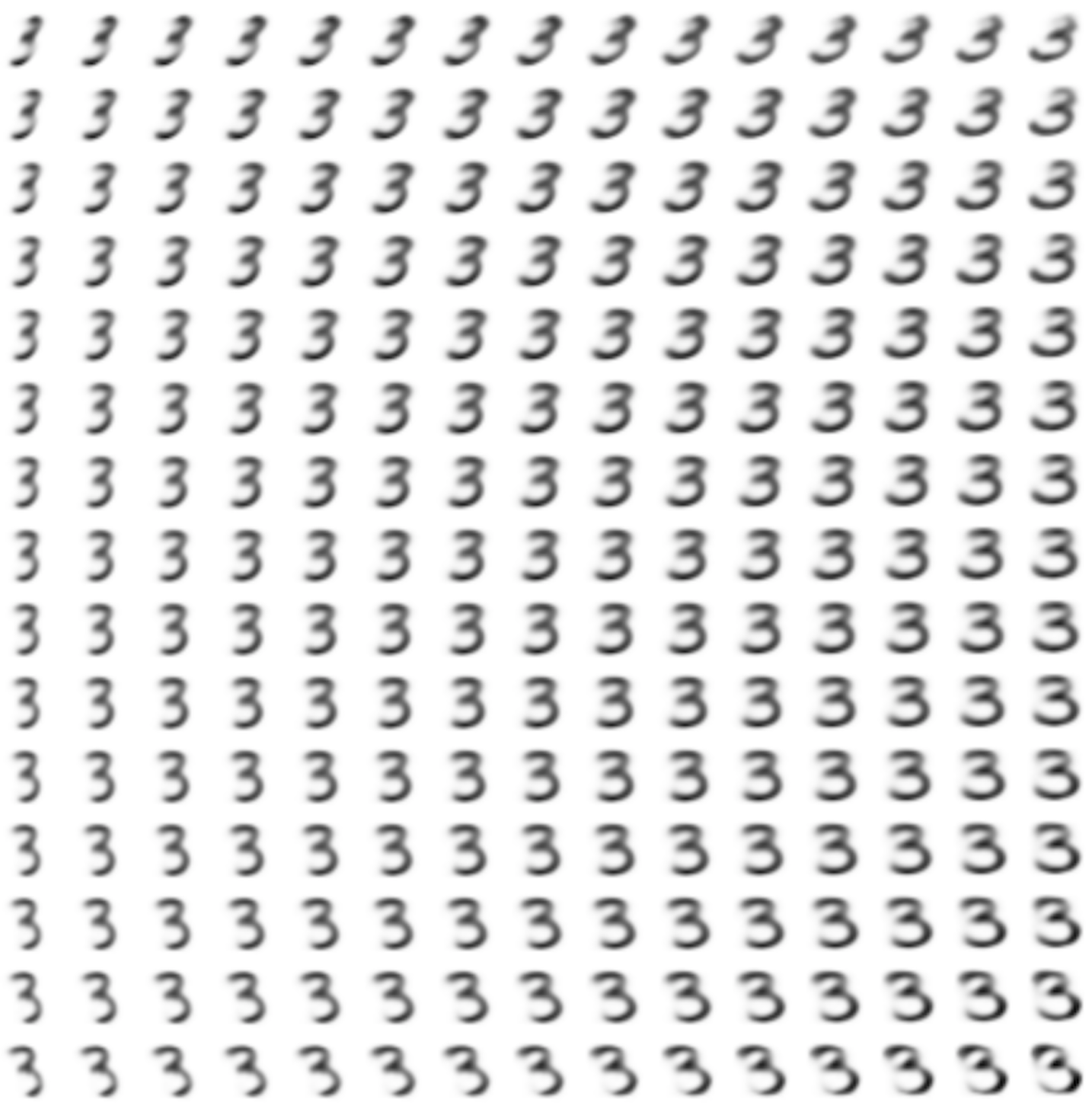

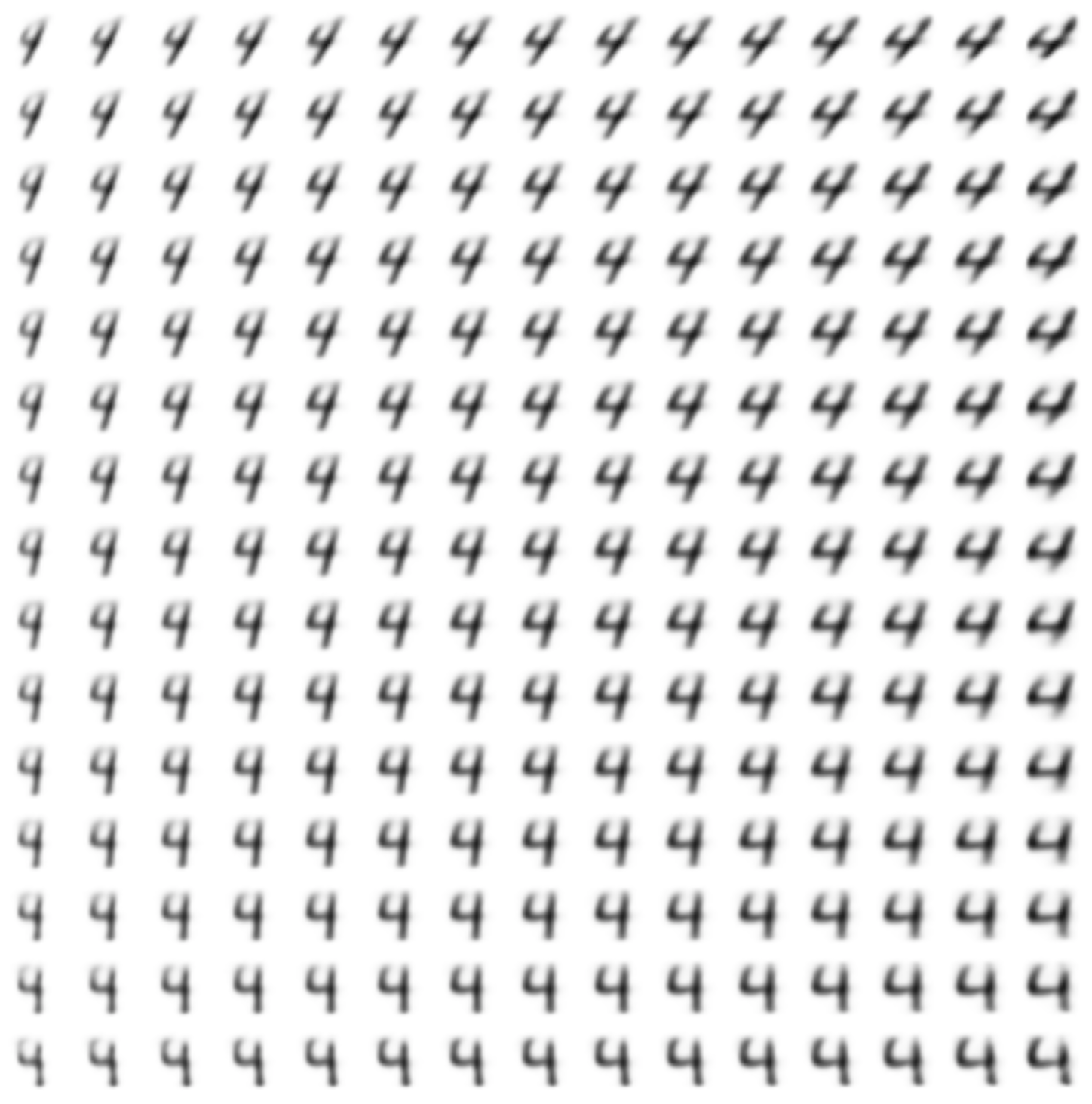

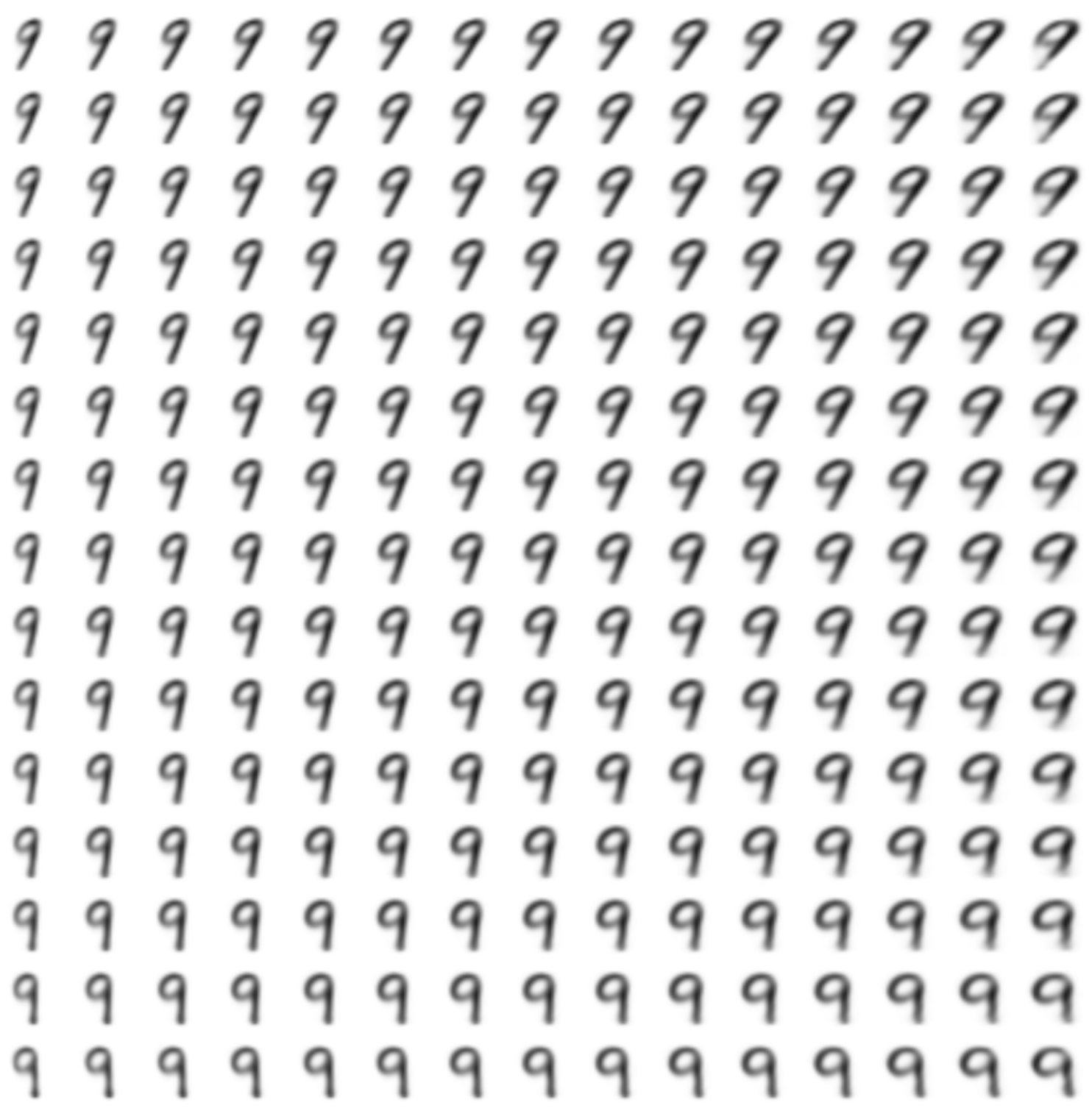

n = 15 # Картинка с 15x15 цифр

from scipy.stats import norm

# Так как сэмплируем из N(0, I), то сетку узлов, в которых генерируем цифры, берем из обратной функции распределения

grid_x = norm.ppf(np.linspace(0.05, 0.95, n))

grid_y = norm.ppf(np.linspace(0.05, 0.95, n))

def draw_manifold(generator, lbl, show=True):

# Рисование цифр из многообразия

figure = np.zeros((digit_size * n, digit_size * n))

input_lbl = np.zeros((1, 10))

input_lbl[0, lbl] = 1

for i, yi in enumerate(grid_x):

for j, xi in enumerate(grid_y):

z_sample = np.zeros((1, latent_dim))

z_sample[:, :2] = np.array([[xi, yi]])

x_decoded = generator.predict([z_sample, input_lbl])

digit = x_decoded[0].squeeze()

figure[i * digit_size: (i + 1) * digit_size,

j * digit_size: (j + 1) * digit_size] = digit

if show:

# Визуализация

plt.figure(figsize=(10, 10))

plt.imshow(figure, cmap='Greys_r')

plt.grid(False)

ax = plt.gca()

ax.get_xaxis().set_visible(False)

ax.get_yaxis().set_visible(False)

plt.show()

return figure

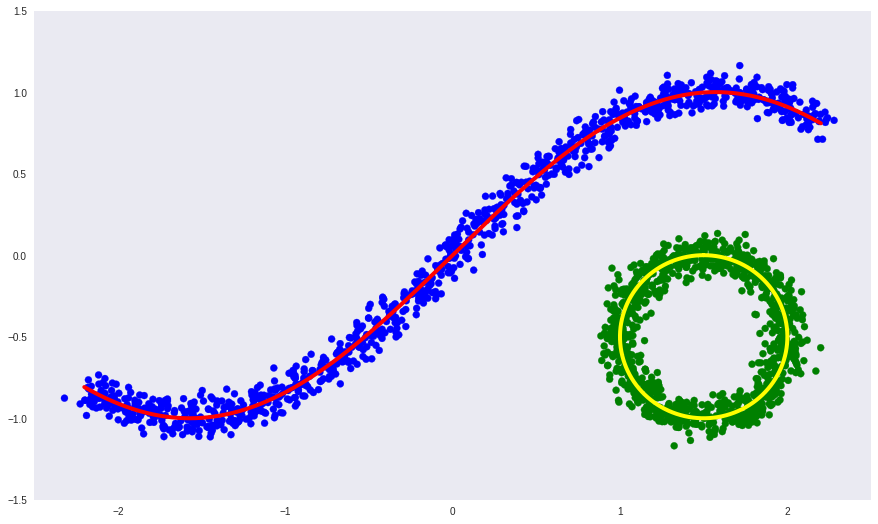

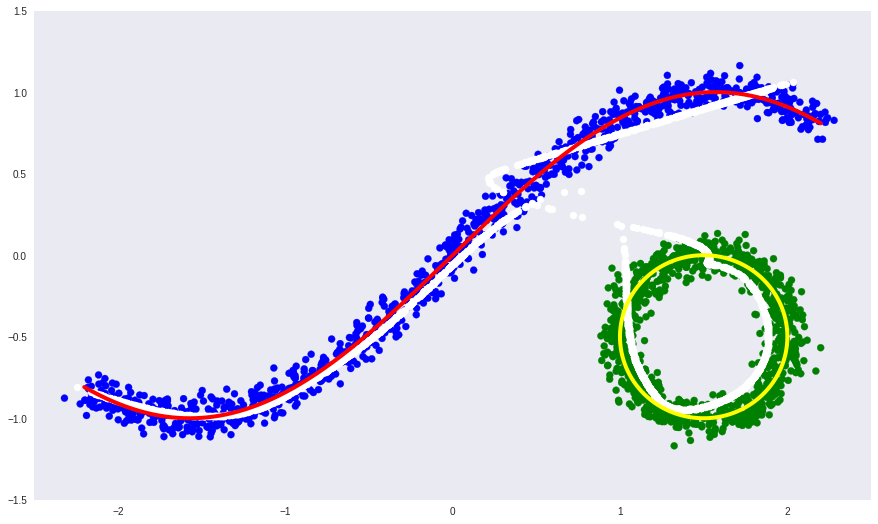

def draw_z_distr(z_predicted, lbl):

# Рисование рпспределения z

input_lbl = np.zeros((1, 10))

input_lbl[0, lbl] = 1

im = plt.scatter(z_predicted[:, 0], z_predicted[:, 1])

im.axes.set_xlim(-5, 5)

im.axes.set_ylim(-5, 5)

plt.show()

from IPython.display import clear_output

from keras.callbacks import LambdaCallback, ReduceLROnPlateau, TensorBoard

# Массивы, в которые будем сохранять результаты для последующей визуализации

figs = [[] for x in range(num_classes)]

latent_distrs = [[] for x in range(num_classes)]

epochs = []

# Эпохи, в которые будем сохранять

save_epochs = set(list((np.arange(0, 59)**1.701).astype(np.int)) + list(range(10)))

# Отслеживать будем на вот этих цифрах

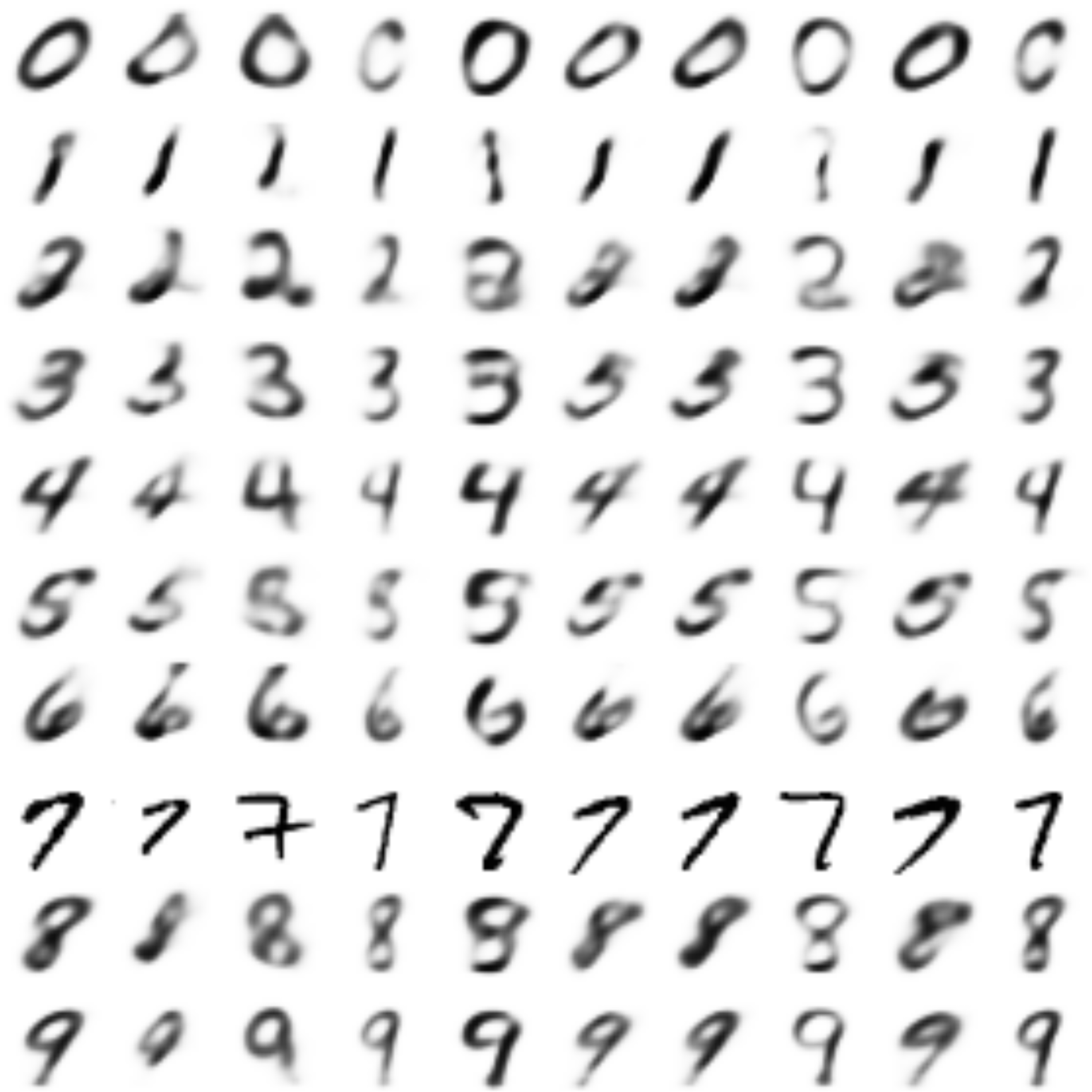

imgs = x_test[:batch_size]

imgs_lbls = y_test_cat[:batch_size]

n_compare = 10

# Модели

generator = models["decoder"]

encoder_mean = models["z_meaner"]

# Функция, которую будем запускать после каждой эпохи

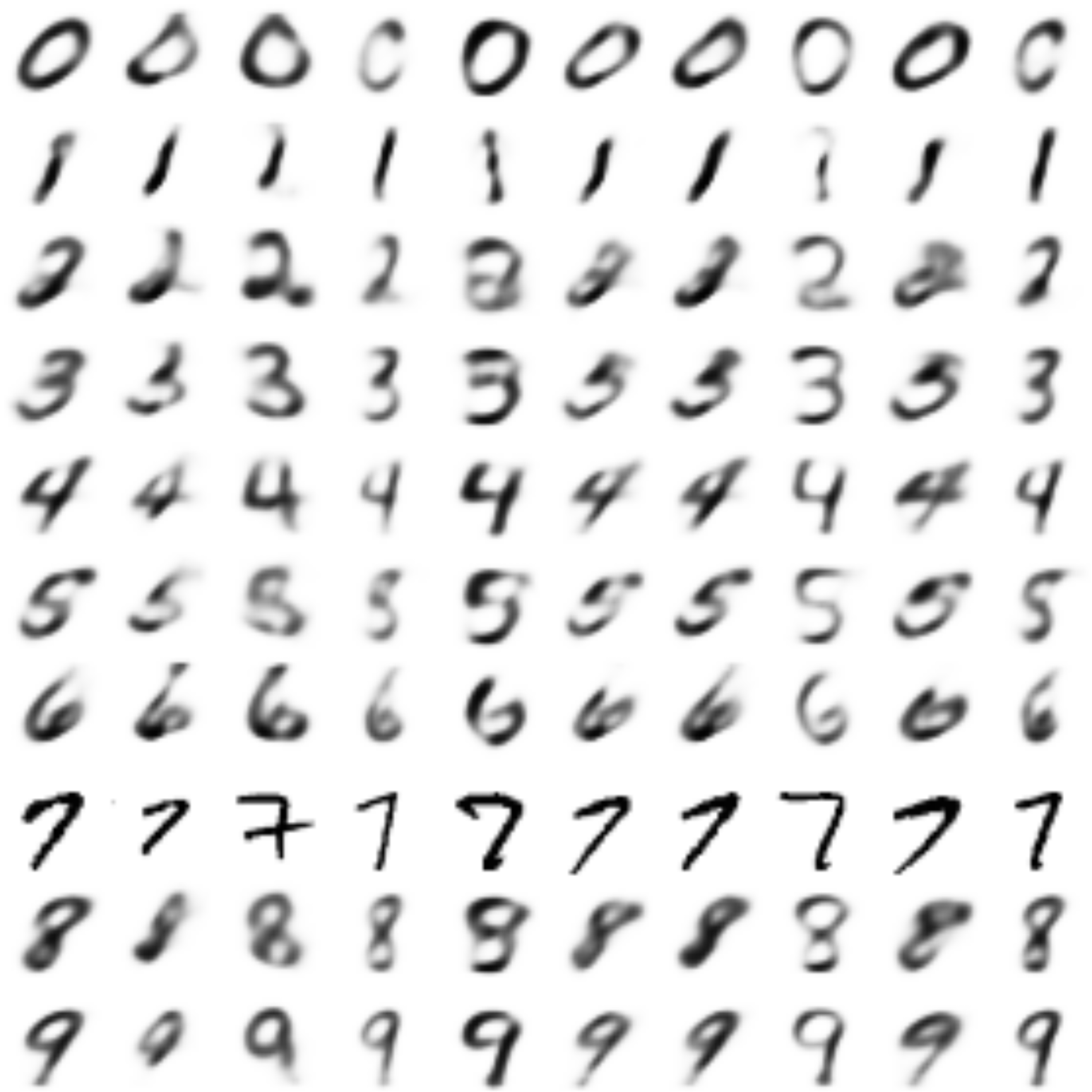

def on_epoch_end(epoch, logs):

if epoch in save_epochs:

clear_output() # Не захламляем output

# Сравнение реальных и декодированных цифр

decoded = cvae.predict([imgs, imgs_lbls, imgs_lbls], batch_size=batch_size)

plot_digits(imgs[:n_compare], decoded[:n_compare])

# Рисование многообразия для рандомного y и распределения z|y

draw_lbl = np.random.randint(0, num_classes)

print(draw_lbl)

for lbl in range(num_classes):

figs[lbl].append(draw_manifold(generator, lbl, show=lbl==draw_lbl))

idxs = y_test == lbl

z_predicted = encoder_mean.predict([x_test[idxs], y_test_cat[idxs]], batch_size)

latent_distrs[lbl].append(z_predicted)

if lbl==draw_lbl:

draw_z_distr(z_predicted, lbl)

epochs.append(epoch)

# Коллбэки

pltfig = LambdaCallback(on_epoch_end=on_epoch_end)

# lr_red = ReduceLROnPlateau(factor=0.1, patience=25)

tb = TensorBoard(log_dir='./logs')

# Запуск обучения

cvae.fit([x_train, y_train_cat, y_train_cat], x_train, shuffle=True, epochs=1000,

batch_size=batch_size,

validation_data=([x_test, y_test_cat, y_test_cat], x_test),

callbacks=[pltfig, tb],

verbose=1)